Weather resistant or not so much? An objective rating scale to finally end the hype

posted Monday, June 24, 2019 at 5:00 PM EDT

If you've been following Imaging Resource for a while, you'll have seen a number of articles I've written about weather-resistance testing and the R&D process I've been engaged in to develop a repeatable, objective test that relates to actual camera usage. You can read the looong story of how I arrived at the current system and methodology here.

The current system provides for testing at precipitation rates of approximately 1 cm/hour and higher, corresponding to what meteorologists would call "heavy" rain. I'm currently working on an array of custom-made drip nozzles that will deliver much lower precipitation rates in a controlled manner, so we can test and distinguish between the weather resistance of lower-end cameras for which that isn't a primary design objective. After a very long development process and a lot of blind alleys, I've come up with a manufacturing process to make the several hundred nozzles I'll need; now it's just a matter of tweaking the design slightly and actually making all of them. I don't have an ETA on this, but I am hoping to have what I've been calling a Version 1.5 dripper array by late summer or early Fall 2019.

The point of mentioning all this is that I want to be able to assign specific weather-resistance ratings to cameras in a way that will cover a very wide range of cameras, from the most poorly-sealed entry-level consumer camera to incredibly well-sealed pro-level cameras like the Olympus E-M1X. (Olympus tests that model internally with jets of water spraying on the camera from all directions; something far beyond anything a real-world user is likely to encounter.)

I don't plan to go to the extent of forcibly spraying water on cameras, but I do want the scale to cover a very wide range of capability, and I want to be able to start using the scale to express the results of our current testing.

So what would a weather-resistance rating system look like?

How much water?

Obviously, a big part of the equation is how much water a camera is being subjected to. A light mist is one thing, a tropical downpour is another. So the rating system should include a specification of what level of precipitation a camera is being rated for.

Casting about for a way to characterize precipitation levels, I eventually had an "oh duh" moment when I realized that meteorologists have a well-established descriptive scale that's been in use for years and that everyone understands. Here's what it looks like, along with the precipitation levels intended for our testing:

| Test Level | Precipitation Description | Precipitation Rate (official definition) | Precipitation Rate (our test) |
|---|---|---|---|
| 1 | "Mist" or Very Light Rain | No official definition ("Drizzle" technically refers to precipitation with drops <0.5 mm, regardless of rate) | 1 mm/hour / TBD (fine droplets) |
| 2 | Light Rain | < 2.5 mm/hour | 2 mm/hour |
| 3 | Moderate Rain | 2.5 - 7.6 mm/hour (Some scales use 10 mm upper limit) | 5 mm/hour |
| 4 | Heavy Rain | > 7.6 mm/hour | 10 mm/hour (current protocol) |
| 5 | "Downpour" | No official definition ("Violent" rainfall is >50 mm/hour) | 35 mm/hour / TBD |

(Note that there are other scales used to describe rainfall rates, such as this one. The definitions in the table above seem to be pretty universal though, and are almost certainly what your local weather forecaster means when he or she uses them in their daily forecasts.)

As noted, our current test protocol is at the "heavy rain" level; our system is calibrated to deliver 1 cm/hour of precipitation, plus or minus a few percent, but it's capable of going much higher. There aren't official definitions for "Mist" or what we're calling "Downpour", and it's not even clear whether we'll end up testing at the "Mist" level at this point, anyway. That will depend on the result of experiments with low-end cameras, once the version 1.5 dripper array is online. (Actually, generating a mist might end up being Version 2.0 of the array; Version 1.5-design nozzles are capable of producing a spray of very fine droplets, given the correct pressure and flow rates, but the very low level of overall precipitation we want for the "mist" condition will probably require a separate, special-purpose array of fewer nozzles to be constructed specifically for that usage.)

Meteorologists officially recognize only levels 2-4 above; there aren't specific definitions of "mist" or "downpour". "Light rain" is open-ended at the low end, covering anything below 2.5 mm/hour, and "heavy rain" is likewise open-ended at the upper end, with anything over 7.6 mm/hour matching that description. (Most people would recognize "drizzle" as meaning a very light rate of precipitation, but it turns out that the term officially only means precipitation with droplets less than 0.5 mm in diameter, regardless of how much of it there is.)
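To make the three official bands concrete, here's a minimal Python sketch that maps a rainfall rate to its meteorological description. The function name `classify_rain` is just an illustrative choice, and the sketch assumes the 2.5 and 7.6 mm/hour boundaries from the table above (some scales put the upper limit of "moderate" at 10 mm/hour instead). It deliberately doesn't try to classify "mist" or "downpour", since those have no official definition.

```python
def classify_rain(rate_mm_per_hour: float) -> str:
    """Return the meteorological description for a rainfall rate in mm/hour.

    Assumed boundaries (from the table above): light rain is below 2.5,
    moderate rain is 2.5 through 7.6, and heavy rain is anything above 7.6.
    """
    if rate_mm_per_hour <= 0:
        raise ValueError("rainfall rate must be positive")
    if rate_mm_per_hour < 2.5:
        return "light rain"
    if rate_mm_per_hour <= 7.6:
        return "moderate rain"
    return "heavy rain"


# The planned test rates for Levels 2-4 each fall inside the matching band.
for test_rate in (2.0, 5.0, 10.0):
    print(test_rate, "mm/hour ->", classify_rain(test_rate))
```

Run against the planned test rates of 2, 5 and 10 mm/hour, this reports light, moderate and heavy rain respectively, which is the point of choosing those rates.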

This leaves a lot of room on either end of the scale for photographically-relevant weather conditions, though. A 2.5 mm/hour rainfall is still something most people would find noticeable, but it's possible that we'll find some entry-level cameras that aren't able to tolerate even that amount of precipitation. At the other end of the scale, while we haven't tried really cranking up the precipitation rate beyond the current 1 cm/hour standard, it's pretty clear that cameras like the Olympus E-M1X won't be remotely challenged by that amount of water.

At this point, I'm leaving the specifications of the precipitation rates for "mist" and "downpour" open; the amounts shown in the table above are provisional for now, to be defined by future experimentation. Even if Level 1 never ends up being used in practice, though, I wanted the rating system to allow for it, so any future need would be addressed.

Exposure duration

The second, equally obvious part of the weather-resistance equation is how long the camera is exposed to the weather in question. It was a revelation when I learned in meetings following CP+ 2019 that the universally-used foam gaskets don't actually seal out water, but rather just slow its progress. (!) Compartment doors sometimes use a silicone rubber material for their seals that is in fact completely impervious to water, but most of the sealing between body panels and other components uses a foam material that water actually can penetrate over time. Once the foam gaskets become loaded with water, they'll allow it to pass into the body of the camera, albeit at a slower rate than it would if the gaskets weren't present. (This means that a camera might do just fine in a particular level of rain one day, but then fail completely the next, even with less rain or a shorter exposure. I'm hoping to write an article explaining this, but don't have an ETA on it at this point. It also underscores the value of having a drybox or other means of drying out wet camera gear, yet another article topic I'm hoping to get around to.)

So, bottom line, it's important for our ratings to reference both rainfall rate and exposure duration.

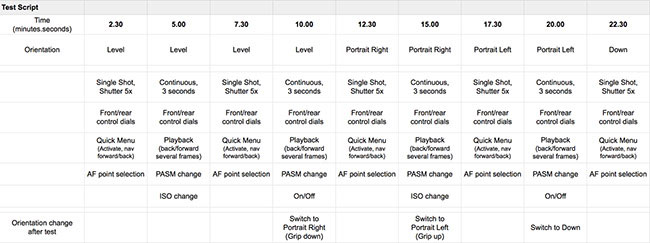

Duration increments

Our standard script for conducting weather-resistance tests specifies a series of control actuations over a 35-minute period, in an attempt to approximate actual usage. In our current "heavy rain" test conditions, we've found that some cameras will fail before we reach the 35-minute point, while others pass that challenge with no problems whatever. For that second group of cameras, we've been letting them sit overnight in the drybox, which more than likely just lets the water seep a bit deeper into the gasket material, rather than drying it out significantly. We then subject them to a doubled test the second day. (That is, on day two, we just run through the 35-minute script twice.)

Any camera fully passing the 35-minute test is pretty well-sealed, but we've already seen cases where one camera might pass the initial 35-minute test but fail at some point during the subsequent 70-minute one. This seems like a worthwhile distinction between cameras, so we'd like to be able to communicate that in our ratings.

So the second part of the rating will reference the number of minutes that a camera survived a given precipitation level. According to the above, the duration ratings will be either 35 or 105 minutes, the latter meaning the camera passed both the first-day and second-day exposure. We'll mention in our review writeups at what point a camera failed, but for the sake of ratings, will separate cameras into categories based on successful completion of either one or three total test cycles. (Given the series of different camera positions and control actuations involved in the test script, we don't think it would be meaningful to rate based on partial cycle completions.)
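As a rough illustration of how the duration part of the rating falls out of the test cycles, here's a small Python sketch. The names `CYCLE_MINUTES` and `duration_rating` are hypothetical, and the sketch assumes the schedule described above: one 35-minute cycle on day one, two more on day two, with no credit for partial cycles.

```python
from typing import Optional

CYCLE_MINUTES = 35  # length of one pass through the test script


def duration_rating(completed_cycles: int) -> Optional[int]:
    """Return the rated duration in minutes, or None if no cycle was completed.

    Only one or three fully completed cycles earn a rating, so a camera that
    passes day one but fails partway through day two is still rated at 35.
    """
    if completed_cycles < 0:
        raise ValueError("completed_cycles cannot be negative")
    if completed_cycles >= 3:
        return 3 * CYCLE_MINUTES  # passed day one and both day-two cycles
    if completed_cycles >= 1:
        return CYCLE_MINUTES      # passed day one only
    return None                   # failed during the first cycle


print(duration_rating(1))  # 35
print(duration_rating(2))  # 35 (second-day test not fully completed)
print(duration_rating(3))  # 105
```

A camera that completes two cycles and then fails in the third gets the same 35-minute rating as one that failed early on day two; the review writeup is where the exact failure point would be mentioned.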

There's a question of whether two separate exposures are the same as one longer one, but I think the main issue is simply to ensure that all cameras are treated the same. I tend to think that the two-day scenario represents a fairly common use case; think of a patch of rainy weather on a vacation, or soccer finals on a rainy weekend. When it comes to the actual impact of two separate tests vs. one long one, I could construct arguments for either one to be more severe than the other.

On the one hand, any surface water in the battery compartment or elsewhere will readily evaporate overnight, and the foam gaskets might dry out slightly. On the other hand, though, the overnight period might let the moisture trapped in the gaskets migrate further towards the inside of the camera, potentially letting water flow through the gasket more quickly on a subsequent exposure. Whether there's a difference or not, though, it makes sense to me to split the more severe versions of our test across two days, as that clearly represents a category of important use cases.

Different durations for different precipitation levels?

For the "heavy rain" level at which we're currently testing, total exposure times are 35 and 105 minutes, which seem like they might reasonably represent how long a photographer would be willing to stand out in that much rain. (Especially without feeling that they'd need to protect their camera somehow.)

It's not a cloudburst or tropical storm, but it is a noticeable amount of precipitation. Some photographers would happily put up with it for hours, but I suspect most would be unlikely to stay out in it for more than a half an hour at a time, or at the outside, a couple of hours. On the other hand, even entry-level users might be likely to tolerate mist or light rain conditions for hours.

Having said that, though, are entry-level users likely to engage in hours-long shooting sessions in the first place? I could imagine a parent standing out in a mist or light drizzle to watch and take photos of a child's soccer game, or someone bringing their camera along for a hike in light rain, but in both situations, they probably won't have their cameras out, shooting all of the time.

I'm very interested in hearing readers' feedback on this point: How long are you likely to stand out, actively shooting in light vs. heavy rain? (Or, more to the point, how long do you typically find yourself wanting to actively shoot in inclement weather?) Please leave any thoughts or comments you might have on the topic in the comments below!

What a weather-resistance rating looks like

From the beginning, my intent has been that our ratings would be broadly accessible to end users, far beyond IR's normal online audience. I envision them appearing on product packaging, in brochures for cameras, point-of-purchase displays, etc. My hope is that the existence of the standard we're creating will prod manufacturers to pay more attention to weather resistance and that it will become something photographers can incorporate into their purchase decisions, even if they've never heard of me or Imaging Resource.

So we needed to come up with a representation of the rating that would be somehow indicative of what it was about, even in parts of the world where English isn't a primary language, and also hopefully be fairly clear in terms of delineating the precipitation level and exposure duration.

Per the discussion above, the basic form will consist of numbers indicating both exposure level and duration, generally looking like "WR Level 4 (105min)"
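For anyone wanting to generate or check these labels programmatically, here's a minimal Python sketch of the text form. The function name `format_rating` is hypothetical, and the only assumptions are the five test levels from the table above and the "WR Level N (Mmin)" pattern shown in the example; it doesn't restrict durations to 35 or 105 minutes, since the durations for other precipitation levels are still open.

```python
def format_rating(level: int, duration_minutes: int) -> str:
    """Build the text form of a rating, e.g. 'WR Level 4 (105min)'."""
    if level not in range(1, 6):
        raise ValueError("level must be between 1 and 5")
    if duration_minutes <= 0:
        raise ValueError("duration_minutes must be positive")
    return f"WR Level {level} ({duration_minutes}min)"


print(format_rating(4, 105))  # WR Level 4 (105min)
print(format_rating(4, 35))   # WR Level 4 (35min)
```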

We've been through a lot of iterations in trying to come up with an icon for our weather rating; here are three earlier examples.

We're still finalizing the design of the icon that will be used to display the ratings, working with a designer and feedback from some of the camera manufacturers as well. It's taking longer than expected, but I wanted to get this article out now, rather than holding it for the final icon design. (I'll update this part once the design is finalized.) These icons will also appear in the test-results writeups on our site.

We have now settled on the basic elements of the icon design, and the main remaining issue has to do with wording. Initially, the icons would just have "Weather Resistant" in bold letters across the top, but it turns out we need to clearly indicate that the ratings are the result of a third party's evaluation, not that of the camera manufacturers themselves. So we're currently working on how to incorporate the phrase or concept "independently tested" without making the design overly cluttered.

The above shows the design we're homing in on, to give an idea of what we're thinking of. (As with everything else here, I really welcome your feedback on the design of the icon!) The central elements are a field of "raindrops", with an umbrella icon blocking them to imply weather resistance. The five rainfall rates will be represented by different sizes of droplets, ranging from lighter to darker in tone. Light rain will be shown as smaller, lighter-colored drops, while heavy rain will be shown as larger, darker-colored ones. The example above has side-by-side examples of droplets for all five levels of the rating standard; in an actual rating icon, all the drops will be the same size, corresponding to the precipitation rate the camera was tested at, and the text will likely change some, to emphasize that this is an independent rating, not something of the manufacturer's own concocting.

What do you think?

So that's the plan for how we'll present our standardized weather-resistance ratings. As noted, some details are as yet to be determined and the specifics of tests at lower levels of water exposure have yet to be established, but having the framework defined will let us begin assigning ratings to cameras as we proceed with our testing, without restrictions related to how the tests might evolve in the future.

A contest: Help us name the rating scale!

Here's another chance for you to weigh in: Help us name this rating scale! Here's a link to a form where you can enter; the first person who comes up with a name we end up using will get a $100 Amazon gift certificate!

I at one point considered calling it the "Etchells scale", but that seemed a bit self-inflated the more I thought about it. On the other hand, a person's name is a fairly neutral sort of term that's very short and concise, making no effort to incorporate a description of what the scale is about. The winning name would be something short, at most two words plus "scale"; it should be something that rolls off the tongue rather than being a long phrase or sentence. I'd like it to have an identity of its own, apart from Imaging Resource, so would prefer it not include "Imaging Resource" or "IR" in the name.

What do you think? It may be that something like the "Etchells scale" would be fine, but I'd like to hear what people think of that.

So click over to the entry form, for a chance of winning an Amazon gift certificate. (If multiple people suggest the name we end up going with, the first who mentions it will get the prize. If we end up using a name of our own choosing, we'll just put all the entries in a hat and do a random drawing for the prize.)

Oh - and we want to hear as many ideas as possible, so we've given you five spaces for different suggestions. But if you think of an even better one later, you're welcome to submit a whole new entry! :-)

The contest will remain open through the end of this week (Friday, June 28), and we'll announce the winning entry sometime early next week.

By now, I've spent almost as much time thinking and writing about our testing as I've spent doing the testing and writing up the results. We do have results published for the Canon EOS R and Nikon Z7, while the Fujifilm X-T3 writeup is in progress. We've tested a couple of other models as well, but have decided to repeat the tests to be more certain of the results before publishing them.

At this point, each additional camera tested brings new revelations, so it's important for me to be personally involved, and my time is always waaay oversubscribed. But we're making steady progress, and you'll hopefully be seeing more tests soon, and others on a fairly regular basis.

Meanwhile, very sincere thanks to everyone who's contributed to this project, whether through comments here on the IR site, or in the form of all the hours so many camera company engineers have given so generously to discuss the topic, share their insights and give their feedback on my efforts. Thank you all!