Pocketable portrait photos: Putting the iPhone 7 Plus’ dual-camera “Portrait” mode to the test

posted Tuesday, October 25, 2016 at 3:00 PM EDT

With today's release of iOS 10.1 to the general public, Apple has unlocked the Portrait Mode exclusive to their dual-lens iPhone 7 Plus model. The mode has been in beta testing since September 21 and I have been experimenting with it since receiving my iPhone 7 Plus in early October.

First, a little background on the portrait mode itself. By leveraging the dual-lens design of the iPhone 7 Plus, the phone mimics the shallow depth of field that is typical of an interchangeable lens camera paired with a large-aperture lens. The effect is achieved by using the built-in image signal processor to create a depth map of the scene from the phone’s two cameras. Once the depth map has been created, the subject is kept in focus while the background of the image is blurred. The iPhone 7 Plus employs machine learning to help identify the subject in a scene, such as a person or an isolated object.

Per TechCrunch, the image processor in the Apple iPhone 7 Plus uses technology from LiNx to create a 3D terrain map. The camera’s longer 56mm-equivalent lens captures the image and the wide-angle lens gathers depth data, which can “generate a 9-layer map.” The two lenses don’t occupy the same physical space, so they capture images from slightly different angles, which allows the phone to create nine slices of depth in the image. After selecting which layers should be sharp, the phone keeps them in focus while applying a circular blur to the rest of the layers. You can read far more about the technology being leveraged in the iPhone 7 Plus’ portrait mode in TechCrunch’s article here.
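To make the layering idea concrete, here is a minimal Python sketch of the general approach described above: divide a depth map into nine slices, keep the chosen slices sharp, and blur everything else. This is an illustration only, not Apple's implementation. The function names (`quantize_depth`, `box_blur`, `depth_effect`) are hypothetical, the image is a tiny grayscale grid, and a simple box blur stands in for the circular blur the phone reportedly applies.

```python
def quantize_depth(depth, num_layers=9):
    """Map each depth value to a layer index from 0 (nearest) to num_layers - 1."""
    flat = [d for row in depth for d in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a perfectly flat scene
    return [
        [min(int((d - lo) / span * num_layers), num_layers - 1) for d in row]
        for row in depth
    ]


def box_blur(image, radius=1):
    """Average each pixel with its neighbors (a stand-in for a circular blur)."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += image[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out


def depth_effect(image, depth, sharp_layers, num_layers=9, radius=1):
    """Keep pixels in the selected depth layers sharp and blur all others."""
    layers = quantize_depth(depth, num_layers)
    blurred = box_blur(image, radius)
    return [
        [
            image[y][x] if layers[y][x] in sharp_layers else blurred[y][x]
            for x in range(len(image[0]))
        ]
        for y in range(len(image))
    ]


# Toy scene: a bright subject in the left column (near), dark background (far).
image = [[10, 0, 0], [10, 0, 0], [10, 0, 0]]
depth = [[1, 9, 9], [1, 9, 9], [1, 9, 9]]
result = depth_effect(image, depth, sharp_layers={0})
```

In this toy scene, the left column lands in the nearest layer and is left untouched, while the background pixels are replaced by their blurred values. A real pipeline would presumably vary the blur strength by layer and smooth the transitions between layers, which is where the edge artifacts tend to come from when it goes wrong.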

How does it function in practice? In my experience, quite well. It creates a distinct effect that does a pretty good job of mimicking the pleasing bokeh of a dedicated camera. When it works, it works well. That qualifier, “when it works,” is important, though, because the mode has some quirks that limit its usability in real-world situations.

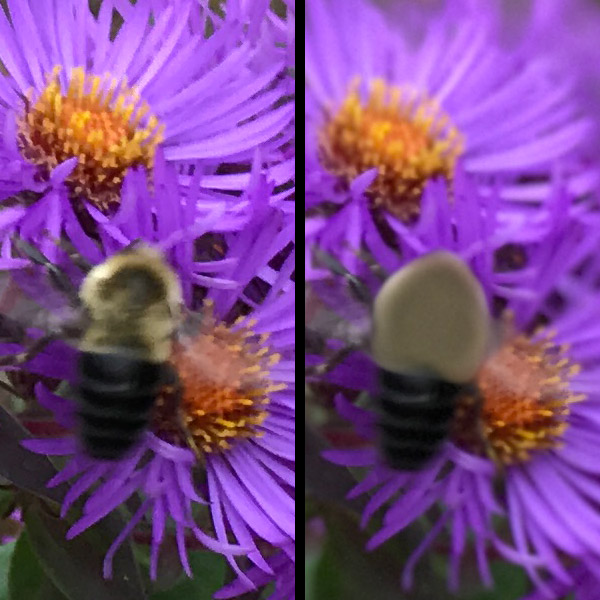

The mode does not activate consistently. You know that it is working when a yellow rectangular text box reading “Depth Effect” appears at the bottom of the frame. You can also see it working on the display in real time. The issue is that it can come and go without any change in the scene, giving various error messages such as “not enough light” or “the subject is too close.” In my experience, it works consistently well at about five to ten feet, although it can work closer or farther away. Regarding the “not enough light” issue, you definitely need more light for the portrait mode to work than you do for regular iPhone photography. Unsurprisingly, moving subjects pose a problem for the mode. Although it can still work, occasional artifacts can be visible along the edges of a subject, as you can see in the flower shot in this article.

Thanks in part to the portrait mode, the main conclusion for me is that the dual-camera feature of the iPhone 7 Plus creates a very real separation between the two iPhone 7 models. Overall, the mode works well, and I hope that it continues to improve as Apple tweaks it and puts machine learning to good use. You can read more about the portrait mode and the rest of the iPhone 7 and iPhone 7 Plus’ photography features in my upcoming iPhone 7 Plus Field Test.