PASINI REPORTS

Nvision '08 -- Visual Computing Shows Off

By MIKE PASINI

Editor

The Imaging Resource Digital Photography Newsletter

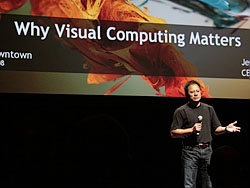

SAN JOSE, Calif. -- You're forgiven for wondering why the world needs another electronics trade show. But if you'd heard Nvidia co-founder and CEO Jen-Hsun Huang explain the genesis of Nvision '08, you'd understand. It's the first trade show for the field of visual computing. With a green scheme, if not a green theme, the show was held Monday through Wednesday this week in San Jose.

We dropped in for a look on Tuesday and found a good bit relevant to photographers from Microsoft's Photosynth and Expression Media to photographer Colin Smith on editing HDR images to the promise of the graphics processing unit, a subject we've been boning up on.

WHY A NEW SHOW

So why did Nvidia roll out the green carpet at the San Jose Convention Center?

Nvision '08. The fountain was green, the truck was green, there was even green carpet on the sidewalk.

Jen-Hsun told a little story about a conversation he had the day before with fellow keynoter Jeff Han. Han's TED 2006 talk on NYU's interface-free, touch-driven monitor (a bit of which you see today on every iPhone) has become a classic. Jen-Hsun asked Han how he had solved the technical issues he ran into as he developed the multitouch interface. By trolling relevant forums, Han confided. When the team didn't know how to solve a problem, they asked in an engineering forum.

And right there, Jen-Hsun put the resources of Nvidia at Han's disposal. No more forum trolling.

It's the kind of challenge that geeks Jen-Hsun up, he said. A project's value isn't calculated by how many units the company will sell (the projections of which he said are inherently unreliable). It depends on whether it's difficult to solve, whether it's a problem only Nvidia can solve and whether it's useful to solve.

And at Nvision '08, Jen-Hsun hoped to find a few more of those problems.

Jen-Hsun Huang. Geeking up on visual computing.

Every industry, he said, needs a platform for its members "to express themselves." This communication has never been available to the visual computing community. The closest thing to it is Siggraph (the special interest group on graphics and interactive techniques). But Siggraph is really just a place for researchers to get together.

So Nvidia agreed to sponsor the first visual computing trade show, where engineers, end users, artists, gamers, scientists, programmers and hardware developers were all in attendance bending each other's ears and joysticks about topics as far-ranging as NASA imaging (where pixels can cover a meter or more), faster image manipulation, 3D imaging, video processing, gaming toolkits for movie making and more. The Nvision team calls it a revolution -- and they're just being modest.

Behind this revolution in visual computing is the graphics processing unit, the chip that handles image rendering on your computer system. Like the floating point unit before it, it's been an optional companion to your central processing unit, the CPU that boots your system and executes the instructions your applications dictate to it. And just as the CPU swallowed the FPU, it threatens to swallow the GPU, too. That's an indication of just how indispensable an option the GPU has become, with more and more video RAM devoted to it in higher-end systems.

The Stage. The Exhibition Hall stage was booked all day.

But Nvidia believes the GPU deserves a place -- or places -- of its own on the motherboard.

Jen-Hsun remembered approaching OEMs some years ago to ask if they'd be interested in GPU parallel processing. Not one showed any interest. So the company built the capability for itself, adding it to every GPU it has produced since.

While Intel bangs out more multi-core processors, programmers are finding it hard to tap into their power by writing multithreaded applications. It's a little like forming a committee to buy someone a birthday cake. More cores mean more committee members, and deciding who does what, checking whether they did it and figuring out what to do while you wait for them can be more taxing than one person just biting the bullet and getting the job done.

But Nvidia's CUDA or Compute Unified Device Architecture provides single-threaded parallel computing. It's more like a line of people passing a bucket of water from the well to the barn on fire. You'll get more water to the fire passing the bucket than running back and forth.

In fact, you get significantly more water. Tapping into the GPU's ability to process data can make your system run several times faster. On a recent Asian trip, Jen-Hsun met with university professors who had actually built personal supercomputers with multiple Nvidia GPUs running CUDA.

With the advent of 3D processing in applications like Photoshop, we started to see the importance of the GPU in photo processing application performance as well. It's also a factor in applying filters, managing layers, rendering slide shows and anything else where those pixels have to be processed. And as we see higher resolution sensors in our cameras and rely more and more on processing Raw data, the data processing issue only escalates.
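To see why pixel work suits this kind of hardware, here is a minimal sketch in Python. It is not CUDA and not how any particular application is written. The function names and the tiny pixel list are made up for illustration. The point is only that a filter like a brightness boost computes each output pixel from its own input pixel, so the image can be cut into pieces and handed to as many workers as you have.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel, gain=1.5):
    """Scale one 8-bit value and clamp it to 255. Uses only its own input."""
    return min(255, int(pixel * gain))


def brighten_chunk(chunk):
    """Apply the same operation to every pixel in one piece of the image."""
    return [brighten(p) for p in chunk]


def split(pixels, parts):
    """Cut the pixel list into consecutive chunks of nearly equal size."""
    size = -(-len(pixels) // parts)  # ceiling division
    return [pixels[i:i + size] for i in range(0, len(pixels), size)]


# Hypothetical sample data standing in for one channel of an image.
pixels = [0, 10, 100, 170, 200, 255, 64, 128]

# One worker handles every pixel in turn.
serial = [brighten(p) for p in pixels]

# Four workers each take a chunk; map() returns the chunks in order.
with ThreadPoolExecutor(max_workers=4) as pool:
    chunks = pool.map(brighten_chunk, split(pixels, 4))
    parallel = [p for chunk in chunks for p in chunk]

print(serial == parallel)
```

Python threads won't actually speed this up, so read it as a picture of the independence, not a benchmark. A GPU applies the same idea with hundreds of workers in hardware.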

Consequently, one of the more important decisions you'll make in your next computer purchase is the video card and GPU you buy. And the more video RAM you provide it (or them), the better.

We've seen some exciting applications of GPU co-processing in some unreleased imaging applications already. It's in your future.

Just a week after Microsoft launched a beta of its Photosynth service for Windows, the company was showing it off on the floor of the Exhibition Hall.

Point Cloud. This shot plots the way the computer sees a synth. Each point represents a 3D coordinate calculated from the images in the synth.

The service, available only on the Web to computers running Windows XP/Vista, creates what Microsoft calls synths from a collection of related images. A synth is a canvas that displays these related 2D images in a 3D scheme after Photosynth analyzes each image to determine how they relate to each other. The analysis, we were told, goes beyond comparing pixels to recognizing features.

The process requires the photographer to take a number of overlapping photographs, each normal scene requiring three overlapping images for a successful analysis. A synth requires between 20 and 300 overlapping images. But you don't have to include GPS data and you don't have to use a tripod or stand in one place -- or even take them all at the same time.

The next step requires the installation of a Windows-only application on your computer to handle both the uploading of the images to the Microsoft Photosynth server and the synth processing of each image. That saves Windows servers from having to do all the computations but it isn't, apparently, very taxing for your hardware, occurring in the background as uploads are processed.

You can add copyright information to the Exif header of your images and through the server but all uploaded images are publicly available. There are no private synths.

The Web interface lets you create a synth and select the pictures you want to use. People who view your synth can't download the individual images but you can embed a synth on your Web site, blog or any place HTML is understood.

The finished synth displays one image at a time with a ghosted hint of related images that can be scrolled toward.

The synth server was "overwhelmed" while we were at the booth, but the Photosynth site provides an emulation viewable on any platform.

EXPRESSION MEDIA

While we were at the Microsoft exhibit, we dropped by the Expression demo and asked about Expression Media, the Microsoft successor to the popular iView MediaPro image cataloging application.

Geotagging. Expression Media now does geotagging.

Indeed, it's alive and well. The new version, released about two months ago, didn't seem to offer any compelling new feature, although it did provide a geotagging function and a photo uploader function.

But, we were told, the application has been substantially rewritten from its iView days. The code is a lot more robust and many of the old bugs have been squashed at last.

Our experience with the iView version on the Mac has been solid but we've heard numerous reports of bugs with the iView Windows version.

Raw support is always tricky with third-party applications, so we asked if the two-month-old release supported the Nikon D700, for example. We were told that Raw support in Expression Media is provided by the operating system. So to the extent Windows or Mac OS X supports the new Raw file format, Expression Media supports it.

What about a brand new format like the Coolpix P6000's NRW format? Expression Media will read the Exif data and if it's in a standard format, it will be able to handle it. NRW not being standard, it appears an OS update will be required to display those previews.

SMITH & HDR PROCESSING

We caught Colin Smith giving away copies of Photoshop Elements on the main stage of the Exhibition Hall and dropped in to watch him play with HDR or high dynamic range imaging in Photoshop CS3.

He had an interesting twist on it, though, relying on the Photomatix plug-in to map the tones of the blended image. But we're getting ahead of ourselves.

Colin Smith. Wacom at the ready, Smith works on an HDR image.

He starts by taking just three Raw images of a subject, bracketing the exposure from -2 EV to +2 EV with a 0 EV shot in the middle. He's found it necessary to do this handheld these days, he said, alluding to security guards who object to their buildings being subjected to tripod-mounted cameras. But if you're still, Photoshop can blend the images perfectly.
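The arithmetic behind that bracket is worth a quick sketch. Each EV step doubles or halves the light recorded, so shots at -2, 0 and +2 EV cover a 16x span of exposure. The Python below is a simplified merge for a single pixel, assuming linear readings scaled 0 to 1 and a simple "trust the mid-tones" weighting. Both are our assumptions for illustration, not Photoshop's actual Merge to HDR method.

```python
def weight(v):
    """Hat weight: trust mid-tones, distrust values near 0 (noise) or 1 (clipped)."""
    return 1.0 - abs(2.0 * v - 1.0)


def merge_pixel(values, evs):
    """Combine one pixel's linear readings (0..1) from several exposures.

    values[i] was shot at exposure offset evs[i]; +1 EV doubles the light.
    The result is scene brightness relative to the 0 EV shot.
    """
    num = den = 0.0
    for v, ev in zip(values, evs):
        w = weight(v)
        num += w * (v / 2.0 ** ev)  # scale the reading back to the 0 EV reference
        den += w
    if den == 0.0:
        # Every reading is pure black or clipped: fall back to the middle shot.
        mid = len(values) // 2
        return values[mid] / 2.0 ** evs[mid]
    return num / den


evs = [-2, 0, 2]
span = 2 ** (evs[-1] - evs[0])  # exposure ratio between brightest and darkest shot

# A highlight that clips at 0 EV and +2 EV but reads 0.5 in the -2 EV shot.
highlight = merge_pixel([0.5, 1.0, 1.0], evs)

# A shadow that is nearly black at -2 EV but reads a healthy 0.5 at +2 EV.
shadow = merge_pixel([0.03125, 0.125, 0.5], evs)

print(span, highlight, shadow)
```

The highlight comes out twice as bright as anything a single 0 EV frame could record, while the shadow is recovered mostly from the +2 EV frame where it has the least noise. That extra range is what the 32-bit file holds.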

The blended image, opened in Photoshop as a 32-bit-per-channel image, contains far more tones than can be displayed on screen or printed. The advantage is that you can select which tones you want to display without the usual banding between tones you get when you adjust tonality and color on a 24-bit image of 8-bit channels.
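A small experiment shows where that banding comes from. This Python sketch uses a simplified model of our own (a linear 0 to 1 scale, no gamma encoding, a made-up shadow ramp), not Photoshop's internals. It lifts the darkest eighth of the tonal range by 3 EV two ways: after rounding to 8 bits, and in floating point with rounding saved for last.

```python
def to_8bit(v):
    """Quantize a 0..1 value to an integer 0..255."""
    return max(0, min(255, round(v * 255)))


# A smooth shadow ramp: 256 samples covering only the darkest eighth of the range.
ramp = [i / 255 / 8 for i in range(256)]

gain = 8  # a strong shadow lift, equal to +3 EV

# Path 1: round to 8 bits first (as in a 24-bit file), then adjust.
adjusted_8bit = [min(255, to_8bit(v) * gain) for v in ramp]

# Path 2: adjust in floating point, round once at the end for display.
adjusted_float = [to_8bit(v * gain) for v in ramp]

# Count how many distinct output tones each path produces.
print(len(set(adjusted_8bit)), len(set(adjusted_float)))
```

The 8-bit path ends up with only 33 distinct tones spread across the full range, which is the visible stair-stepping. The floating point path keeps all 256.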

In Photoshop, you do this with the Exposure command, but it isn't quite as powerful as Photomatix. If you do use the Exposure command, he pointed out, be sure to use the local adaptation option, the only one that displays an editable curve.

The advantage of the Photomatix plug-in is far more dramatic effects and greater control of the tonal mapping. In his case, this led to an illustration effect that seemed to rely on a simplified palette with greater contrast.

One questioner asked how you can avoid the halo effect of the Photomatix rendering. Smith said the trick was to play with the sliders until it disappears. But it takes a good deal more playing than he was able to demonstrate. The halo is hard to avoid.

Someone told Jen-Hsun that he was the nicest house in a lousy neighborhood. That neighborhood is the VGA controller, timing registers telling the display how many lines of resolution to put up in industry-standard formats. That's what a GPU did in the 1980s. But the nice house is the multiple GPUs processing threads of data simultaneously that make a visual computing industry possible.

The world may be weary of electronics trade shows, but Nvision highlights a hard-working if little appreciated component of our computing lives that promises some startling benefits before they roll out the carpet again next year.